Sometimes your greatest strength can emerge as a weakness if the context changes.

Harsha Bhogle

Cricket commentator and journalist

Abstract

The maturity of living labs has grown over the years and researchers have developed a uniform definition by emphasizing the multi-method and real-life, contextual approach. The latter predominantly focuses on the in situ use of a product during field trials where users are observed in their everyday life. Researchers thus recognize the importance of context in living labs, but do not provide adequate insights into how context can be taken into consideration. Therefore, the contribution of this article is twofold. By means of a case study, we show how field trials can be evaluated in a more structural way to cover all dimensions of context and how this same framework can be used to evaluate context in the front end of design. This framework implies that living lab researchers are no longer dependent on the technological readiness level of a product to evaluate all dimensions of context. By using the proposed framework, living lab researchers can improve the overall effectiveness of methods used to gather and analyze data in a living lab project.

Introduction

Innovation can be described as a five-step process that begins with identifying an opportunity and culminates with the post-launch of a specific product or service (De Marez, 2006): i) opportunity identification; ii) concept design, development, and evaluation; iii) product design, development, and evaluation; iv) launch; and v) post-launch. However, the implied linear structure of this idealized process fails to convey the reality that user involvement may require more iterations or adjustments to a specific design than initially anticipated. This is especially true because it is difficult to accurately predict the future needs of users (Von Hippel, 1986). Indeed, innovation is an iterative process of need discovery – a pattern arising out of chaos – that is primarily visible in the front-end of design (Sanders & Stappers, 2012). This path from uncertainty to clarity was illustrated by Damien Newman’s “squiggle” (Figure 1) in the context of design, but its message holds equally well for the process of innovation.

Figure 1. Damien Newman’s “squiggle” representing the design process (CC-BYND)

Sanders and Stappers (2012) built upon “the squiggle” and concluded that focus in the design process will be accomplished by trial and error in discovering and fulfilling (future) user needs. Living lab projects accomplish this iterative process by involving users throughout the entire innovation cycle (Dell’Era & Landoni, 2014). Indeed, living labs are renowned for being multi-faceted phenomena embodying both open and user innovation (Coorevits et al., 2016). Their multi-method approach enables developers to take a much more granular approach to product development from inception to conclusion.

One key component found within the living labs methodology, namely the “in the wild” experimentation, provides detailed insights into a broad area of contextual elements that can influence user experience (Ballon & Schuurman, 2015; Følstad, 2008; Kjeldskov & Skov, 2014; Veeckman et al., 2013). Here, we refer to “the wild” as a synonym for the context of use and, more specifically, the uncontrollable aspects of real-life environments. Most living lab projects focus on the environmental aspect of use context such as the evaluation of a product in a familiar or real-life environment (Følstad, 2008). These familiar environments – such as a usability lab that looks like a living room – raise some interesting questions, for example, regarding the degree of realism required to make an evaluation meaningful and ecologically valid or how these complex contextual requirements affecting user experience can be researched in the fuzzy front-end of design (Dell’Era & Landoni, 2014; Mulder & Stappers, 2009; Stewart & Williams, 2005). However, context research is about more than the environment and entails researching all the factors that influence the user experience of a product (Visser et al., 2005). Conducting this research in the earlier phases of the innovation process can support the planning and decision-making process of a specific living lab project. Context research can, for example, provide insights into how to identify and select realistic contexts for the tasks at hand, but also how to recruit realistic participants for the selected contexts. This in turn will lead to higher ecological validity (Roto et al., 2011), which is one of the primary objectives of the living lab methodology.

According to Følstad’s (2008) literature review, half of all living labs are missing out on this opportunity because they do not research the use context before the testing phase takes place. The other half take a more ethnographic approach, which incorporates methods that appear oriented towards context research (Følstad, 2008). Contextual inquiry in the front-end of design includes methods that involve lead users (Von Hippel, 1986), generative design techniques (Sanders & Stappers, 2012), context mapping (Visser et al., 2005), and experience prototyping (Buchenau & Suri, 2000).

In other words, there are ample methods available that can measure or elicit context during the multiple phases of the innovation process, but they all define and describe it loosely. Mulder and Stappers (2009) and Dell’Era and Landoni (2014), for instance, emphasize the importance of contextualization via the previously mentioned methods, but they do not provide insights on the operationalization of context and more specifically how it can be measured during all the phases of a living lab project. Also, several researchers have emphasized the need for more guidance in the practicalities of researching context (Kaikkonen et al., 2005; Kjeldskov et al., 2004).

In this article, we will therefore first clarify the concept of context via a framework. Subsequently, we will describe the methodology of the project that we use as a case study to explore and explain the context dimensions and their properties selected from the literature. Next, we illustrate the context dimensions and properties with the case study project material and conclude with a reflection on the framework's use for living lab research projects.

Context: A Multi-Layered Concept that Is More than Just the “Environment”

As previously mentioned, due to its inherent complexity, the concept of context should receive more attention. In previous work, published by Geerts and colleagues (2010), we pointed out three concerns with the concept of context: i) it is habitually treated as a container concept, with a vague definition encapsulating different aspects that influence use; ii) it is often conceptualized as something static, underestimating its dynamic nature and change during the use process; and iii) it is recurrently used post-hoc as an explanation for results while operationalization upfront is neglected. Therefore, we will focus on its dimensions and complexities, allowing living lab researchers to make more conscious research design decisions when studying context.

Several dimensions of context can be found in the field of human–computer interaction, which is relevant given that our living lab research mainly focuses on the digitization of products and services. Human–computer interaction is a field that has grown out of the traditions of information science, psychology, sociology, etc. and therefore brings a synthesis of insights to inspire living lab research with a focus on the interaction of people with digital products and services in the wild.

Dourish (2004) distinguishes two perspectives on context: representational and interactional. In the representational view, context is perceived as a set of environmental features surrounding generic activities. Dourish states that context in this view is a form of information, which is delineable and stable, and where it is possible to separate the context from the activity. In the interactional view, context arises from (inter)action, thus from the relationship between the user’s internal characteristics (e.g., motivation, intention, internalized societal values, goals) and the external characteristics (e.g., location, social aspects, technical components). Consequently, context cannot be treated as static information, but is a relational property arising out of an activity. This perspective is closely in line with the living lab methodology because it represents an approach for sensing, prototyping, validating, and refining complex solutions with end users (i.e., internal characteristics) in multiple and evolving real-life contexts (i.e., external characteristics). However, the operationalization and description of a dynamic context via relevant dimensions is challenging, and methodologies to measure these dynamics are rare or still in their infancy (Mulder et al., 2008).

We assert that a viable framework for living lab projects can be found in the work of Jumisko-Pyykkö and Vainio (2012) on the use context of mobile human–computer interaction. They refer to the ISO standard 13407 (ISO, 1999), which separated the user and system from the other components, but perceive context as something stable. Although it is better to treat context as a dynamic constant, we will start from Jumisko-Pyykkö and Vainio’s representational perspective as an analytical approach, separating the context components and observing them as external to the user and system. We will elicit the dimensions of context via the iterative nature of living lab research. The limitations are comparable to making a time-lapse video with different pictures: the quality of the video depends on the number and quality of snapshots we can take. It is not possible to map every single factor of context, even in a simple real-world environment, but we can take snapshots from different perspectives, at various key moments, and bring them together in a collage of snapshots that comes nearer to telling the entire story (Hinton, 2014).

The different dimensions of use context following the work of Jumisko-Pyykkö and Vainio (2012) are: temporal, physical, technical/information, social, and task. Table 1 provides details and examples of all five dimensions, their definitions, and the properties. To emphasize the dynamic aspect of context we positioned the time dimension first in the list. The dimension “technical/informational context” overlaps with the physical context when dealing with the property of artefacts, but we agree with Jumisko-Pyykkö and Vainio (2012) that the additional category “technical/informational context” does not, in some cases, completely overlap with the physical context dimension, because not all digital solutions have a very tangible physical component. In non-technical innovation domains, this dimension representing the technical/information context can thus be redundant.

We suggest that using the framework with its different dimensions as a guideline for the planning phase of a living lab research project and iteratively applying it in the subsequent steps will provide more actionable, rich, and dynamic insights into the use context. In the following sections, we will illustrate this suggestion using a case study showing how to use these contextual dimensions.

Table 1. Dimensions of context of use (following Jumisko-Pyykkö & Vainio, 2012)

| Dimension | Definition | Properties | Examples |
| --- | --- | --- | --- |
| Temporal context | “The user interaction with the system in relation with time” | Duration | Length of interaction, length of event |
| | | | Anytime, weekend, peak |
| | | Before, during, and after | Preparations, documenting, triggers |
| | | Temporal tensions of actions | Hurry, wait, rapid reaction |
| | | Synchronous/asynchronous interaction | Talking/texting |
| Physical context | “The apparent features of a situation or physically sensed circumstances in which the user/system interaction takes place” | Spatial location | Geographical location, distance |
| | | Functional place | School, work |
| | | Functional space | Space for relaxation |
| | | Sensed environmental attributes | Light, weather, sound, haptic |
| | | Movement/mobility | Motion of user or environment |
| | | Artefacts | Physical object surrounding interaction |
| Technical/information context | “Relation to other services and systems relevant to the user’s system” | Other systems and services | Devices, applications, and networks |
| | | Interoperability, informational artefacts and access | Between devices, services, platforms |
| | | Mixed reality systems | |
| Social context | “Other persons present, their characteristics and roles, the interpersonal interactions and the culture surrounding the user systems interaction” | Other persons present | Virtual, private/public; characteristics and roles with influence on user |
| | | Interpersonal interaction | Turn taking, co-actions, collaboration, co-experience |
| | | Culture | Values, norms, and attitudes (e.g., a culture of uncertainty avoidance) |
| Task context | “The tasks surrounding the user interaction with the system” | Multitasking | Multiple tasks priority depends on goals, primary vs secondary tasks |
| | | Interruptions | Interaction interrupted (e.g., by a technical problem) |
| | | Task domain | Goal oriented (effectiveness, efficiency) vs action/process itself (entertainment) |

Method and Case Study Description

Given the exploratory nature of this research, this article describes a single case study using participatory action research. Action research is particularly relevant when producing guidelines for best practice (Sein et al., 2011). Yin (2009) defines the case study research method as “an empirical inquiry that investigates a contemporary phenomenon within its real-life context; when the boundaries between phenomenon and context are not clearly evident; and in which multiple sources of evidence are used”.

The goal of the research was to understand how context could be studied within living lab research projects. Because testing a new framework should be done iteratively to come to a middle-range, theory-like approach (a theorizing approach aimed at integrating theory and empirical data), a case study is an appropriate research tool for exploring key variables and their relationships (Eisenhardt, 1989; Yin, 2009). The purpose of the project was to develop an application that can assist employees in developing and maintaining soft skills such as empowerment after receiving coaching. The living lab project’s starting point was situated at the front-end of design, and the project took place over the course of one year, starting in January 2014 and running until February 2015. The partners in this project were: i) an SME that provides coaching to companies and came up with the idea of the application; ii) a large organization that provided access to its physical facilities, staff (e.g., human resources, information technology), and primary users (employees); and iii) iMinds (now imec), a research institute with extensive experience in managing living lab research projects.

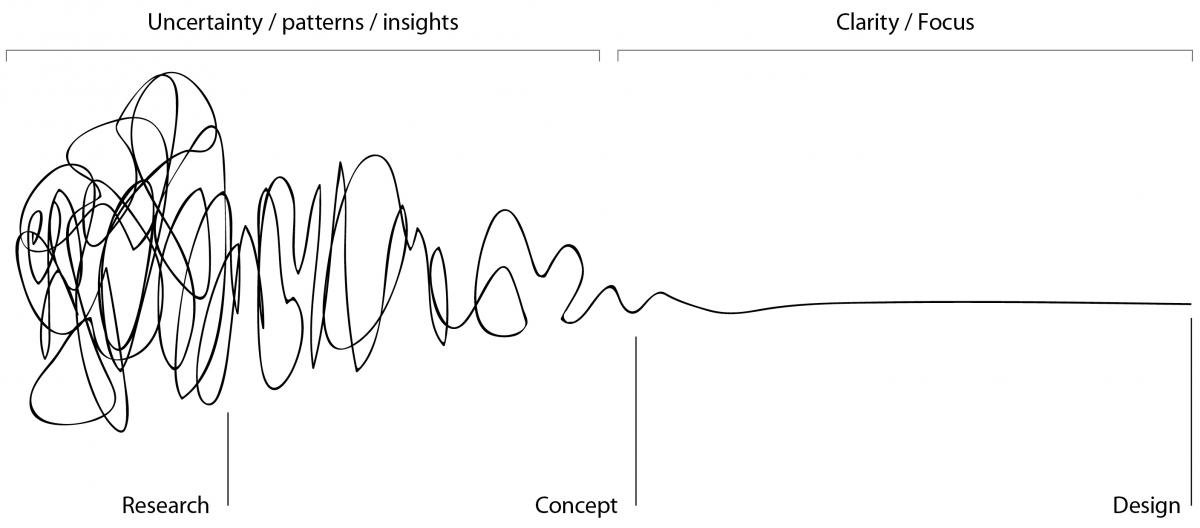

The general research structure implemented by iMinds Living Labs (now imec.livinglabs) combines the innovation process flow created by De Marez (2006), described earlier, with the design squiggle by Damien Newman (2006) shown in Figure 1. The flow is iterative in nature because user input should be implemented throughout the entire innovation process, which allows for optimization and modification of the specific product. We follow Sanders and Stappers (2012) in their reasoning that a project should entail different approaches to move the innovation forward: i) exploring or understanding; ii) generating or making; and iii) evaluating. We depict this research flow for our particular case in Figure 2 and describe each phase and numbered step in further detail below.

Figure 2. The research flow of the living lab case from idea to concept to prototype to minimum viable product (MVP)

The project started from the initial idea that employees need more support (via an application) to develop and maintain soft skills on the job. Then, the research flow described in Figure 2 was followed.

In Phase 1 (from Idea to Concept), in order to better understand the innovation, insights were gathered from a range of modern technologies supporting behavioural change within organizations. Additionally, existing literature on behavioural change, technology adoption, and gamification (in organizations) was reviewed (Step 1). Based on these factors, a low-fidelity prototype was developed in the form of a paper mock-up (Step 2). In a following step (Step 3), a matrix was developed to invite different employees to participate in interviews. Coaches, the individuals being coached, and human resources personnel of large organizations were invited to provide input on the use context and the low-fidelity prototype developed in Step 2. Nine interviews (with a duration of two hours per interview) took place with different stakeholders to gain insights into the current way of coaching and behavioural change in the organization. The interviews were conducted in a meeting room of the organization and reflected on the use of the application in that organization, so the contextual dimensions were implicitly and explicitly included. A first introduction to the mock-up happened towards the end of the interview (Steps 3 & 4).

In Phase 2 (from Concept to Prototype), the designer made wireframes in the form of a clickable mock-up for the application based on the first insights from Phase 1 (Step 5). These wireframes were evaluated and further co-designed with six potential end users of the application: three coaches and three individuals being coached. This co-design activity took place through one-on-one sessions of approximately one hour per potential end user in a meeting room of the organization (Steps 6 & 7).

Based on the input of these potential end users, the wireframes were further optimized in Phase 3 (from Prototype to MVP) by the designer (Steps 7 & 8) and used as input for the implementation phase, which was run as a “Wizard of Oz assessment”: a technique that is used to evaluate an unimplemented technology by using an unseen human (i.e., the researcher plays the role of a hidden “wizard”) to simulate the responses of a proposed system (Step 8). For this third phase, the appropriate technology to replicate the application was selected, namely Qualtrics (a survey application) and Panelkit (an e-mail management application). An invitation was sent to people who had recently received a coaching session (n=20), asking them to attend a kick-off event of the testing phase. During the kick-off event, the goal of the test was explained and the process was described. Twelve people attended the kick-off event and initiated the testing phase. Finally, before creating an MVP, we invited people during and after the testing period to share their opinion on the testing phase via different qualitative research methods (i.e., a feedback form, online post-surveys with mainly open-ended questions, and interviews) (Step 9) and to ensure the participative design process (Step 10).

During the living lab project, the participants were observed, conversations were recorded, and notes were taken by the researchers. The results were a priori coded using Table 1.
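A priori coding against a fixed set of dimensions and properties can be sketched as a small codebook check. The Python sketch below is an illustration under stated assumptions, not the tooling used in the project: the names `CODEBOOK`, `CodedNote`, `validate`, and `uncovered_properties` are hypothetical, the codebook contains only the property labels given in Table 1, and the three example notes paraphrase findings reported in this article.

```python
from dataclasses import dataclass

# Codebook: dimensions and properties as listed in Table 1.
# Only property labels that are actually given in Table 1 are included.
CODEBOOK = {
    "temporal": {
        "duration",
        "before, during, after",
        "temporal tensions of actions",
        "synchronous/asynchronous interaction",
    },
    "physical": {
        "spatial location",
        "functional place",
        "functional space",
        "sensed environmental attributes",
        "movement/mobility",
        "artefacts",
    },
    "technical/information": {
        "other systems and services",
        "interoperability, informational artefacts and access",
        "mixed reality systems",
    },
    "social": {"other persons present", "interpersonal interaction", "culture"},
    "task": {"multitasking", "interruptions", "task domain"},
}


@dataclass(frozen=True)
class CodedNote:
    """One field note with its phase and a priori code (hypothetical structure)."""
    phase: int
    dimension: str
    prop: str
    text: str


def validate(note: CodedNote) -> CodedNote:
    """Reject codes that are not part of the a priori codebook."""
    if note.dimension not in CODEBOOK:
        raise ValueError(f"unknown dimension: {note.dimension!r}")
    if note.prop not in CODEBOOK[note.dimension]:
        raise ValueError(f"unknown property for {note.dimension}: {note.prop!r}")
    return note


def uncovered_properties(notes, phase):
    """Per dimension, list the codebook properties with no note in the given phase."""
    seen = {(n.dimension, n.prop) for n in notes if n.phase == phase}
    return {
        dim: sorted(p for p in props if (dim, p) not in seen)
        for dim, props in CODEBOOK.items()
    }


# Example notes paraphrasing findings from the case study.
notes = [
    validate(CodedNote(1, "temporal", "duration", "Two weeks between evaluations is too long")),
    validate(CodedNote(3, "temporal", "duration", "One week between evaluations is too short")),
    validate(CodedNote(2, "social", "culture", "The word 'coach' refers to the company's hierarchy")),
]
gaps_phase_1 = uncovered_properties(notes, phase=1)
```

Listing the uncovered properties per phase is one possible way to see which parts of the framework a given research step has not yet touched; it does not imply that every property must be found.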

Results: Applying the Contextual Dimensions and their Properties to a Living Lab Case

Analyzing context via the framework provided us with a strong indication of how the technology would be used in the professional lives of the users and what the required features should be to enhance product–user interaction in that context. Without focusing on the different elements of context, certain critical features would not have been exposed, potentially resulting in failure of the technology (e.g., the requested name change from “coach” to “buddy” in the application). Because the application was not developed at the time of the test phase, the company was able to integrate any feedback iteratively and change the concept accordingly.

Table 2 shows the insights the researchers gathered while focusing on context during the different phases of the research flow. In each phase, we illustrate our insights per context-of-use dimension (temporal, physical, technical/information, social, and task) and its accompanying properties (e.g., duration, temporal tensions) as defined in Table 1. Only the properties for which we gained relevant insights for product development are discussed in Table 2. This means some properties might not be included compared to Table 1. This confirms the time-lapse video metaphor, which emphasizes the importance of gathering different perspectives, but also the difficulty of creating a full perspective on context.

Table 2. Properties of each context-of-use dimension across the phases of the living lab project

| Dimension | Phase 1 | Phase 2 | Phase 3 |
| --- | --- | --- | --- |
| Temporal context | Duration: Time between evaluations less than 2 weeks, preferably weekly | Duration: Evaluations should be as soon as possible/immediate after a training moment | Duration: One week time between evaluations too short |
| | Temporal tensions of actions: Easy re-entry point: what if I drop out? | Temporal tensions of actions: What if meeting is unexpectedly cancelled, can I reschedule my training moment | Before, during, after: Insights in availability of buddy during meetings is necessary to know before choosing who will be buddy |
| | Before, during, after: Useful having something to remind you from time to time to work on habit change | | Syn-/asynchronous interactions: Unable to start app, when requested buddy delays to reply |
| | Before, during, after/duration: When having a free moment (e.g. on your way home) an extra trigger is needed: “time for reflection” | | Before, during, after: More triggers needed, reminder is not enough to stimulate behavioural change |
| | | | Before, during, after: When drop in motivation to change behaviour over time, system needs to spark motivation |
| Physical context | Functional places: Interview in the workplace | Functional places: Session in the workplace, in meeting room | Functional places: Test, interview and survey in the workplace |
| | Functional space: Its use is in a professional environment and thus game elements are not appropriate | Functional space: The initial wireframes are still too playful, more professional look and feel necessary for their big corporate environment | Functional space: The proposed prototype took the professional space too much into account |
| | Spatial location: Physical proximity of coach is necessary | | Movement/mobility: If you are off-site you can’t access your professional mail address, which reminds you of the training moments |
| Technical/information context | Interoperability, informational artefacts and access: The organization blocks access to certain websites, applications, … e.g. personal e-mail | Other systems and services: There are certain places in the buildings where you cannot access the wifi or 3/4G? | Interoperability, informational artefacts and access: The security infrastructure of the organization blocks any non-integrated application |
| | | | Other systems and services: If I am on the move (going from one meeting to the next) I do not always have access to my emails and cannot receive/provide feedback |
| Social context | Interpersonal interaction: Face-to-face interaction is preferred | Culture: The word “coach” refers to the company’s hierarchy | Culture: Buddy is “too sweet”, because giving personal feedback is not part of corporate culture |
| | Other persons present: Chosen coach needs to be already present in your activities (e.g. meetings) | Interpersonal interaction: It is important to choose your own coach (buddy) as someone you trust that can provide feedback in a safe environment | Other persons present: The habit you want to change is not always observable by the coach. |
| | Culture: Being asked to become someone’s coach is perceived as an honour | | Other persons present/interpersonal interaction: The coach needs to perform two roles: witnessing the behaviour and motivating. One or more persons can take on these roles. |
| | Culture: Autonomy is highly valued, for example choosing your own training moments, coach, ... | | Other persons present/interpersonal interaction: People experience difficulties to define their habits correctly. They need their buddy to guide them in the process such as choosing an observable habit, defining the right steps to get there, ... |
| Task context | Multitasking: High level of multitasking, work priorities make it difficult to focus on soft skills | Interruptions/multitasking: The timing of reminders should not interrupt an ongoing task flow (ok after meeting, but not when at work at desk) | Interruptions/multitasking: It is difficult to combine being active in a meeting and observing one’s behaviour, when not being experienced in observation techniques. |
| | Multitasking: Link to own calendar is needed to integrate behaviour change in between or during appropriate work tasks | | Task domain: Not every type of meeting is appropriate, ability to choose a good meeting to make first attempt of small step improvement of one’s behaviour |

Because living lab projects take a multi-method and iterative approach, temporal context is intuitively integrated in the research process: the user–system interaction is studied over time. However, Table 2 shows that the temporal context dimension should be made more explicit to detect nuance and added value for the iterative approach. For example, in Phase 1, the employees perceived the suggested time of two weeks in between evaluations as too long. In Phase 3, the weekly time intervals provided for evaluation were perceived as too short. The participants were able to make a more accurate estimation because the remaining contextual dimensions enriched the simulation of the future experience, and thus the perception of the ideal duration. By focusing on time more explicitly, researchers can much more easily identify components that otherwise would be overlooked, and they can focus on multiple components that appear simultaneously.

The physical context dimension guided our research design to operationalize context (Table 2). We purposefully held all research activities in the functional place for which the application was designed: an office. Throughout the different design phases, taking into account the users’ concerns and feedback on the appropriateness of the application for their functional space is an iterative process, through which we seek the right balance between being work-appropriate and being entertaining, fun, and engaging. The artefact component of the physical context is not used in this analysis given that the project is oriented towards a mobile service, which consists of virtual and physical aspects. These aspects are discussed further under the technical/information context dimension. There is still room for improvement in defining the components of the technical/information context.

With the social context components, one can see the three layers of the Mantovani (1996) model: culture for the social-cultural level, the other individuals present as a proxy for the situational level, and interpersonal interaction for aspects that entail more micro-interactions. We observed that culture is easier to extrapolate from interviews than from reflections based on experiences in daily life, which are necessary to prompt aspects of interpersonal interactions on a more granular level. Therefore, both approaches are needed in order to elicit the multiple aspects of social context.

As is the case with temporal context, particular attention must be given to the subject of task context, which is a critical component of user experience research. In each step of the living lab project, there is a focus on the tasks and actions that users will fulfill to reach the goal of the application, in this case, behavioural change. In the wireframe session, the researchers assumed a given flow of tasks being executed by the users, which made it less likely that new contextual task components would be discovered. The session focused more on validating previous task context components. The danger when focusing too hard on this task component is that other components of context are easily neglected.

When a researcher or practitioner is confronted with contradictory findings using this framework in different living lab research steps, they must assess the results critically by looking at the methods of data collection, respondent validation, and analysis. Triangulation of results produced by multiple researchers can provide new insights and strengthen the quality of those findings. This triangulation highlights new perspectives that are supplementary and it enables researchers to dispute contradictory insights gathered from other researchers. The purpose of triangulating the data is to increase the understanding of a complex phenomenon, not to determine consensus nor to validate any specific results. Additionally, it is important to take a timelapse-based approach because it helps identify incompatibilities that will allow a more fundamental grasp of the data. The analysis can include looking for both consistencies and inconsistencies and, eventually, identifying patterns. Both researchers and practitioners must be prepared to question findings and interpretations and assess both the internal and external validity of the data. As with all context research, it is especially critical to be aware of potential biases and other factors that may influence the insights in the case (Malterud, 2001).

Conclusion and Managerial Implications

In this article, we defined and decomposed the container concept of context into various dimensions and properties. This structural approach allowed us to research the everyday life context of a living lab project. Although we implemented the framework retroactively, we were able to determine that it is feasible to detect the different dimensions and properties of context at any stage of the innovation process. The dimensions can be used, for example, as sensitizing concepts (Bowen, 2008). Our research further indicates that contextual input varies depending on the research method being used. This finding not only emphasizes the importance of a multi-method approach in living lab projects, it also highlights the necessity of focusing on use context during every step of the design process. In Phase 2, we only focused on a single dimension of context: the task context. However, participants still provided relevant input on the other dimensions as well. A first aspect was their vision on gamification, which evolved over time. We were only able to capture this aspect because the participants voluntarily mentioned it; it would not have been detected otherwise. This finding indicates that the framework can help researchers and practitioners to capture other contextual aspects that might influence the user experience if they are focusing too much on one dimension. It also shows that researchers should constantly keep open minds so that they are better able to detect new or additional dimensions. Additionally, it indicates that a single research step is never enough because context is dynamic and evolves over time. Timelapsing and multiple methods such as different prototypes, contextual observation, user testing, and participatory design can all bring important perspectives to complete the picture and should be considered to improve the outcomes of living lab projects.

The framework contributed to the analysis phase of the living lab project, independent of the maturity of the innovation. However, this approach to structuring context is also helpful in the design and execution of the research flow, where different cycles of “understand – make – evaluate” will be executed. The model allows for a systematic and reflective process in the development of knowledge related to context. For example, dimensions mentioned spontaneously by interviewees (e.g., “I don’t want a coach, I want a buddy”) can indicate their priority, but listing the different dimensions and their properties in the interview topic guide can guide the search for more contextual elements (e.g., other artefacts that can support behavioural change, such as a sticker on the user’s computer that serves as a reminder to work on their soft skills).

The framework helps both researchers and practitioners to structure their approach, but it does not necessarily imply that all properties of those dimensions need to be found. The researcher can, for example, choose to focus solely on specific elements of context based on previous research indicating the importance of these elements. Additionally, several dimensions of context (for example, temporal and place) can be present in the same example, but that is a normal consequence of the multidimensionality of context. All components can influence each other. For example, the property “task interruptions” in a meeting is also influenced by the properties of the social dimension (other people present and their role in the meeting) and the temporal dimension (availability of the buddy during the meeting). The difficulties experienced when decomposing context make us more aware of the interrelationships between the different dimensions and their properties, which is an interesting analytical insight. The decomposition of the dimensions into several properties was originally developed for mobile applications and as such might need improvement if applied in other digital and innovation domains.

Our framework further contributes to bridging the gap in the literature regarding the lack of a clear methodological approach for living lab projects because it provides a more unified approach to measuring context. The structure can increase the impact of living lab projects, for example, by gathering more actionable user insights, and it can serve as a starting point to further refine this methodology. Furthermore, the implementation of the framework will enhance either the ecological validity of a living lab project or the extent of its practical validity within the innovation process. In particular, because researchers do not have to rely only on observable phenomena or on what is casually mentioned by participants, they will be able to search for all relevant dimensions of context that might influence the user experience.

If innovation managers focus on only a single aspect of user research, they can expect only a limited overview of the context of use. To gain a more thorough, 360-degree overview, they need to implement an iterative research path in which the framework can help them focus on varying dimensions of context and sufficiently balance the cost and quality of the output.

In conclusion, this article provides a way to take context into consideration in living lab research by describing and applying a framework that helps to structure all the different dimensions and properties of context. The framework can reduce the challenges experienced in introducing “the wild” into living lab projects by focusing, in a more structured way, on the dynamic relationships of people and activities in real life.

References

Ballon, P., & Schuurman, D. 2015. Living Labs: Concepts, Tools and Cases. Info, 17(4): info-04-2015-0024.

Bowen, G. A. 2008. Grounded Theory and Sensitizing Concepts. International Journal of Qualitative Methods, 5(3): 12–23.

Buchenau, M., & Suri, J. F. 2000. Experience Prototyping. In Proceedings of the 3rd Conference on Designing Interactive Systems: Processes, Practices, Methods, and Techniques: 424–433. New York: ACM Press.

https://doi.org/10.1145/347642.347802

Coorevits, L., Schuurman, D., Oelbrandt, K., & Logghe, S. 2016. Bringing Personas to Life: User Experience Design through Interactive Coupled Open Innovation. Persona Studies, 2(1): 97–114.

http://doi.org/10.21153/ps2016vol2no1art534

De Marez, L. 2006. Diffusie van ICT-innovaties: accurater gebruikersinzicht voor betere introductiestrategieën.

https://biblio.ugent.be/publication/470186/file/1880801.pdf

Dell’Era, C., & Landoni, P. 2014. Living Lab: A Methodology between User-Centred Design and Participatory Design. Creativity and Innovation Management, 23(2): 137–154.

http://dx.doi.org/10.1111/caim.12061

Dourish, P. 2004. What We Talk About When We Talk About Context. Personal and Ubiquitous Computing, 8(1): 19–30.

https://doi.org/10.1007/s00779-003-0253-8

Eisenhardt, K. 1989. Building Theories from Case Study Research. Academy of Management Review, 14(4): 532–550.

http://www.jstor.org/stable/258557

Følstad, A. F. 2008. Living Labs for Innovation and Development of Information and Communication Technology: A Literature Review. Electronic Journal for Virtual Organizations and Networks, 10(August): 99.

Geerts, D., De Moor, K., Ketykó, I., Jacobs, A., Van den Bergh, J., Joseph, W., Martens, L., & De Marez, L. 2010. Linking an Integrated Framework with Appropriate Methods for Measuring QoE. In Proceedings of the 2nd International Workshop on Quality of Multimedia Experience (QoMEX 2010): 158–163.

https://doi.org/10.1109/QOMEX.2010.5516292

Hinton, A. 2014. Understanding Context: Environment, Language, and Information Architecture. Cambridge: O’Reilly Media.

ISO. 1999. ISO 13407: 1999 – Human-Centred Design Processes for Interactive Systems. Geneva: International Organization for Standardization (ISO).

http://www.iso.org/iso/catalogue_detail.htm?csnumber=21197

Jumisko-Pyykkö, S., & Vainio, T. 2012. Framing the Context of Use for Mobile HCI. In J. Lumsden (Ed.), Social and Organizational Impacts of Emerging Mobile Devices: Evaluating Use, 2: 1–28. Hershey, PA: Information Science Reference.

Kaikkonen, A., Kekäläinen, A., Cankar, M., Kallio, T., & Kankainen, A. 2005. Usability Testing of Mobile Applications: A Comparison between Laboratory and Field Testing. Journal of Usability Studies, 1(1): 4–16.

Kjeldskov, J., & Skov, M. B. 2014. Was It Worth the Hassle? Ten Years of Mobile HCI Research Discussions on Lab and Field Evaluations. In Proceedings of the 16th International Conference on Human-Computer Interaction with Mobile Devices & Services (MobileHCI ’14): 43–52.

http://dx.doi.org/10.1145/2628363.2628398

Kjeldskov, J., Skov, M. B., Als, B. S., & Høegh, R. T. 2004. Is It Worth the Hassle? Exploring the Added Value of Evaluating the Usability of Context-Aware Mobile Systems in the Field. In Proceedings of the 6th International Symposium on Mobile Human-Computer Interaction: 61–73.

http://dx.doi.org/10.1007/978-3-540-28637-0_6

Malterud, K. 2001. Qualitative Research: Standards, Challenges, and Guidelines. The Lancet, 358(9280): 483–488.

http://dx.doi.org/10.1016/S0140-6736(01)05627-6

Mantovani, G. 1996. Social Context in HCI: A New Framework for Mental Models, Cooperation, and Communication. Cognitive Science, 20(2): 237–269.

http://dx.doi.org/10.1207/s15516709cog2002_3

Mulder, I., & Stappers, P. J. 2009. Co-Creating in Practice: Results and Challenges. Paper presented at the 2009 IEEE International Technology Management Conference (ICE), June 22–24.

https://doi.org/10.1109/ITMC.2009.7461369

Mulder, I., Velthausz, D., & Kriens, M. 2008. The Living Labs Harmonization Cube: Communicating Living Lab’s Essentials. The Electronic Journal for Virtual Organization & Networks, 10(November): 1–14.

Roto, V., Väätäjä, H., Jumisko-Pyykkö, S., & Väänänen-Vainio-Mattila, K. 2011. Best Practices for Capturing Context in User Experience Studies in the Wild. In Proceedings of the 15th International Academic MindTrek Conference on Envisioning Future Media Environments (MindTrek ’11): 91–98.

https://doi.org/10.1145/2181037.2181054

Sanders, E. B.-N., & Stappers, P. J. 2012. Convivial Toolbox: Generative Research for the Front End of Design. Amsterdam: BIS.

Sein, M. K., Henfridsson, O., Rossi, M., & Lindgren, R. 2011. Action Design Research. MIS Quarterly, 35: 37–56.

Stewart, J., & Williams, R. 2005. The Wrong Trousers? Beyond the Design Fallacy: Social Learning and the User. In Rohracher, H. (Ed.), User Involvement in Innovation Processes: Strategies and Limitations from a Socio-Technical Perspective. Munich: Profil-Verlag.

Veeckman, C., Schuurman, D., Leminen, S., & Westerlund, M. 2013. Linking Living Lab Characteristics and Their Outcomes: Towards a Conceptual Framework. Technology Innovation Management Review, 3(12): 6–15.

http://timreview.ca/article/748

Visser, F. S., Stappers, P. J., van der Lugt, R., & Sanders, E. B.-N. 2005. Contextmapping: Experiences from Practice. CoDesign, 1(2): 119–149.

http://dx.doi.org/10.1080/15710880500135987

Von Hippel, E. 1986. Lead Users: A Source of Novel Product Concepts. Management Science, 32(7): 791–805.

http://dx.doi.org/10.1287/mnsc.32.7.791

Yin, R. 2009. Case Study Research: Design and Methods. Beverly Hills, CA: Sage Publications.

Keywords: context, innovation process, living lab, real-life